Abstract: In this paper we explore the nature of embodied interaction and play within the context of designing an interactive art system for movement rehabilitation. We discuss the nature of embodiment in two primary ways: (i) interactive new media artworks that support embodiment and encourage a seamless form of interaction between the actor and their ambient environment, and (ii) the nature of embodied interaction in human computer interface design. Within this framework we describe the development of our multi-modal interactive artwork called Elements. The Elements system aims to help clinicians rehabilitate upper limb movement in patients with Traumatic Brain Injury (TBI). We also discuss the user’s experience of the system and its potential as a mode of therapy.

Introduction: Traumatic Brain Injury & Rehabilitation

When I think of my body and ask what it does to earn that name, two things stand out. It moves. It feels. In fact, it does both at the same time. It moves as it feels, and it feels itself moving. Can we think of a body without this: an intrinsic connection between movement and sensation whereby each immediately summons the other? (Massumi, 2002: 1)

Many people with Traumatic Brain Injury (TBI) endure a disconnected and fragmented experience of the body as a consequence of their accident or injury. According to our collaborators at the Epworth Hospital, Melbourne, the symptoms of TBI patients vary tremendously. The therapists have described an extreme range of symptoms, from sensations of movement such as perpetual free fall when sitting still, to nausea triggered by patterns printed on the therapist’s clothes, to more general difficulties planning and initiating actions.

These symptoms, among many others, often lead to a significant incidence of depression among people with physical and intellectual disabilities, which presents a psychological barrier to engaging in rehabilitation and daily living (Esbensen et al, 2003; Shum et al, 1999). Patient engagement is one of the keys to maintaining motivation in rehabilitation therapy, and maximising the engagement of these people in relevant and pleasurable activities can complement existing, often tedious, approaches to rehabilitation. In recent years, there has been considerable interest in combining media art and associated interactive technology as a means to engage people in physical therapy (Brooks and Hasselblad, 2004; Reid, 2002). Interactive multimedia environments hold great potential to augment physical awareness and recovery in the rehabilitation of people with sensory and physical impairments (Rizzo et al, 2004), and interactive media technology in neuro-rehabilitation may help TBI patients regain basic functional skills (Holden, 2005). Indeed, enhancing rehabilitative processes in the early stages following TBI is one of the great challenges for therapists.

TBI represents a significant health issue for Australians. Based on figures published by the Australian Institute of Health and Welfare, approximately 400,000 people suffer from a life-long debilitating loss of function as a result of some form of brain injury (Fortune and Wen, 1999). TBI refers to a cerebral injury caused by a sudden external physical force. Such physical trauma can lead to a variety of physical, cognitive, emotional, and behavioural deficits that may have long-lasting and devastating consequences for the victims and their families.

After injury, movement performance in TBI sufferers is constrained by a number of physiological and biomechanical factors, including increased muscle tone resulting from spasticity, reduced strength of the prime mover muscles, and limited coordination of body movement. More holistically, the patient’s sense of position in space (their sense of embodiment, or body schema) is severely compromised. Under normal circumstances, information from the different sensory modalities is correlated seamlessly by the mobile performer; our sense of changes in the flow of visual input, for example, is associated with the rate of change in bodily movement (viz. kinaesthesis). In TBI, the main streams of sensory information that contribute to the sense of embodiment (visual, auditory, tactile, and somatic) are fragmented. To rebuild body sense and the ability to effect action, the damaged motor system must receive varied but correlated forms of sensory input during the early phase of recovery; this is thought to maximise the opportunity for synaptic regrowth and retuning. For example, simple forms of augmented feedback (e.g., visual analogue displays of limb position from different viewpoints) have been shown to support re-training of basic movement patterns in TBI (Gaggioli et al., 2006). A simple control parameter that underpins this form of recovery is repeated practice (Platz et al., 1999). Indeed, one of the major impediments to recovery is the patient’s limited ability to engage in the therapeutic regime and to persist with it. This underlines the importance of presenting therapeutic tasks and environments in a meaningful and stimulating way, and in ways that reinforce the ordered relationship between the sensory world, motor commands, and the predictable effects of those commands on one’s body.

As recently as the 1960s, neuroscientists believed the brain to be “hard-wired” and largely immutable by the time a person reached early adulthood, and held that any structural damage to the brain was untreatable (Rose, 1996). This commonly held view was challenged in the 1970s, when scientists began to investigate “neuroplasticity”, a term that refers to changes in the organization of the brain as a result of experience. Evidence began to accumulate suggesting that brain activity associated with given functions, such as limb movement, memory and learning, could move to a different location in the brain as a consequence of normal experience or of brain damage and recovery. Neuroplasticity thus became a fundamental scientific finding supporting the treatment of many forms of acquired brain injury previously considered untreatable. The brain can metaphorically “re-wire” itself by creating new nerve cells and reorganising synaptic pathways around damaged brain tissue. Indeed, evidence of plasticity has been found well into the adult years, and for many years after brain damage in various cases and conditions. In the particular case of TBI, it is perhaps more correct to say that plasticity is greatest in the first 6 to 12 months post-injury but remains evident in a reduced form thereafter. These advances in neuroscientific knowledge open up new and exciting possibilities for the deployment of interactive media in rehabilitative treatments.

Media philosopher Marshall McLuhan discussed how electronic media affect us at a social, psychological, and sensorial level. McLuhan suggested that each new medium creates a unique form of awareness in which some senses are heightened and others diminished (McLuhan and Zingrone, 1995). His first law of media is that all media are extensions of some aspect of humanity, and he argued that each medium has the potential to reorganize and extend our senses in its own way: electronic media extend our nervous system, telephony extends our hearing, the television camera extends the eye, and the computer extends the processing and storage capacities of our central nervous system. Moreover, McLuhan saw the consequences of these sensorial reorganizations as far more significant than the content itself; thematic and other content are almost secondary to the far-reaching effects that a type of media has on the way we process information generally. This insight is consistent with the neuroscientific understanding of how mind and brain change with sensory experience. More specifically, screen-based media provide many instances in which our sensory perceptions are altered and enhanced. For example, numerous studies reported by Shawn Green and Daphne Bavelier suggest that playing interactive computer games has profound effects on neuroplasticity and learning (Green and Bavelier, 2004). Computer games have been shown to improve perception and cognition in gamers compared with non-gamers by heightening spatial and sensorimotor skills. These improvements could generalise to a number of real-world scenarios, such as faster response times when driving a car or faster performance in sport. The practical therapeutic uses of interactive computer games could be numerous, particularly for individuals with diminished movement and cognitive function.
In this regard, interactive media art environments that support an embodied view of performance and play are of particular interest.

Embodied Cognition and Interaction

The work of artist and technologist Myron Krueger provides an early example of embodied performance and play in media art through his work VIDEOPLACE (Krueger, 1991: 33-64). Krueger also intuitively speculated that this work could be used in the service of TBI movement rehabilitation (ibid: 197-198). Krueger developed a computer vision system as the user interface for VIDEOPLACE. This interface could be programmed to be aware of the space surrounding the user and to respond to their behaviour in a seamless manner. Participants realised that they could move virtual objects around the screen, change the objects’ colours, and generate electronic sounds simply by changing their gesture, posture and expression to interact with the on-screen graphic objects. Here Krueger explored embodiment between people and machines by focusing his artwork on the human experience of interaction and on the interactions afforded by the environment itself.

“Embodiment” concerns the reciprocal relationship that exists between mind, biology and the environment. The term “embodied cognition” is used to capture this seamless relationship between performer, task at hand, and environment (Wilson et al, 2006). Put simply, the way we process information and make sense of the world is affected by our body. A mental construct or concept gains structure from the experiences that gave rise to it (Mandler, 1992: 596). Much work in the cognitive sciences shows how spatial and even linguistic concepts are assembled from action, or draw meaning by virtue of being grounded in the moving and feeling body (Barsalou, 2008; Glenberg and Kaschak, 2002). For example, terms like “feeling down”, “on top of the world”, and “behind the eight-ball” all seem to be derived from our previous experience of real-world interactions with objects.

This embodied view of human performance is consistent with trends in Human Computer Interaction (HCI) design (e.g., Dourish, 2001; Ishii and Ullmer, 1997), whose proponents argue that interaction design should be based on first-person, lived, bodily experience and its relation to the environment. Embodied phenomena are the ones we encounter directly rather than abstractly, occurring in real time and real space. We inhabit our bodies and they in turn inhabit the world, with seamless connection back and forth (Dourish, 2001: 101-102). This approach to interaction design capitalises on our physical skills and our familiarity with real-world objects. Tangible user interfaces (TUIs), for instance, aim to exploit a multitude of human sensory channels otherwise neglected in conventional interfaces and can promote rich and dexterous interaction (Ishii and Ullmer, 1997). TUIs are physical objects that may be used to represent, control and manipulate computer environments. They represent a major transition in HCI from the Graphical User Interface (GUI) paradigm of desktop computers to interfaces that transform the physical world of the user into a computer interface. For example, the Nintendo Wii Remote Controller could be considered a TUI.[1]

As reported elsewhere (Wilson et al, 2007), our conceptual approach combines (ecological)[2] motor learning theory with an embodied view of interaction design to inform the way we conceive of the relationship between performer and workspace. The concept of ‘affordance’ proposed by ecological psychologist James J. Gibson (Gibson, 1966) is of particular relevance to our approach. “Affordance” refers to the opportunities for interaction that meaningful objects in our immediate environment provide, relative to our sensorimotor capacities. The perceptual properties of different objects and events are thus mapped fairly directly to the action systems of the performer (Garbarini and Mauro, 2004). The affordances offered by our TUIs have been designed to engage the patient’s attention with the movement context and the immediate possibilities for action. So, rather than embedding virtual objects in a virtual world, we use real objects and a direct mode of interaction. The ecological approach has much to commend it because it does not draw an artificial distinction between performers and the natural constraints on their performance.

Elements: a system for art and play

TBI patients frequently exhibit impaired upper limb function, including reduced range of motion, impaired reaching accuracy, and an inability to grasp and lift objects or perform fine motor movements (McCrea et al., 2002). The Elements system responds to this level of disability by providing patients with an “intuitive” desktop workspace that affords basic gestural control.

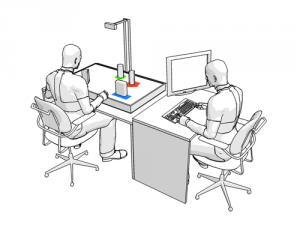

The Elements system comprises a desktop display and a computer vision system that tracks the patient’s arm gestures while they use the TUIs (figure 1). The TUIs are soft, graspable interfaces that incorporate low-cost sensor technology to augment feedback, which in turn mediates the form of interaction between performer and environment. This combination of 3D, non-invasive technology was developed to empower adults with TBI who have moderate or severe movement disabilities. Patients can use the audiovisual feedback to refine their gestural movements both online and over time. In addition, patients can manipulate the feedback to create unique audiovisual outcomes. In short, the system design provides tactility, texture, and audiovisual feedback to entice patients to explore their own movement capabilities in externally directed and self-directed ways.

Our design is sensitive to the patient’s sense of embodiment and how the environment might be presented to afford new opportunities for action and play. Our aim is to provide an interaction aesthetic that is coupled to the individual’s perceptual and motor capabilities, building a durable sense of agency. Elements provides this by combining variable degrees of audiovisual feedback with the underlying forms of user interaction that provide patients with the opportunity to alter the aesthetics in real time.

There are two main aesthetic modes of user interaction that exploit the potential of the Elements system. Each mode encourages a different aesthetic style of user interaction and, consequently, has different application potential. The first mode presents a task-driven computer game whose complexity can be varied to match the competence level of the patient. In this task, a patient places a TUI on a series of moving targets (image 1). The accuracy, efficiency, proximity, and placement of the TUI are reinforced via augmented audiovisual feedback. The patient can review their performance and test scores as therapy progresses. These goal-directed activities support the participant’s perception of progress and improvement, which encourages self-competitive engagement: the patient perseveres and strives to improve their performance scores over time.
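The paragraph above names accuracy, efficiency, proximity and placement as the qualities the feedback reinforces, but does not say how they are measured. As a minimal sketch, assuming the tracker reports TUI positions as (x, y) screen coordinates, two common kinematic measures could be computed as follows: placement error (distance from the target centre at the moment the TUI is set down) and path efficiency (straight-line distance divided by the distance actually travelled). Both function names are hypothetical; this is an illustration, not the Elements scoring code.

```python
import math


def placement_error(tui, target):
    """Distance between where the TUI was set down and the target centre.

    Hypothetical accuracy measure; both arguments are (x, y) tuples.
    """
    return math.dist(tui, target)


def path_efficiency(path):
    """Straight-line distance divided by the distance actually travelled.

    1.0 means a perfectly direct reach; lower values mean a more
    circuitous path. A path with no movement is treated as 1.0.
    """
    travelled = sum(math.dist(a, b) for a, b in zip(path, path[1:]))
    if travelled == 0.0:
        return 1.0
    return math.dist(path[0], path[-1]) / travelled


# Usage: a reach that goes right, then up, instead of diagonally.
print(placement_error((3.0, 4.0), (0.0, 0.0)))                  # 5.0
print(path_efficiency([(0.0, 0.0), (3.0, 0.0), (3.0, 4.0)]))    # 5/7, about 0.714
```

Path efficiency ignores movement speed, so in practice it would likely be paired with a timing measure; that pairing is likewise an assumption rather than something the source describes.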

Figure 1: Illustration of the prototype workspace.

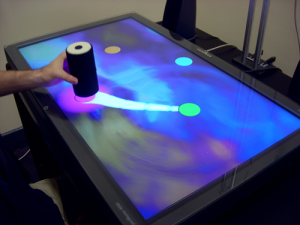

The second mode of user interaction is a suite of abstract tools for composing with sound and visual feedback that promotes artistic activity. In these environments there are no set objectives; the participant derives engagement from having the power to create something while interacting with the work. For example, in one environment the patient might take pleasure in mixing and manipulating sound samples in an aesthetically pleasing way. A broad range of experiential outcomes is possible in each of the exploratory Elements environments. We describe these qualities of the experience as “emergent play” through creative and improvisational interaction. Painting and sound mixing are expressed through the patient’s upper limb control of the TUIs.

Image 1: Patient places a TUI onto a series of moving targets.

The suite comprises three exploratory environments: Mixer, Squiggles and Swarm. The mode of user interaction in each environment is designed to challenge the patient’s physical and cognitive abilities and motor planning, and to provoke their interest in practicing otherwise limited movement skills. Patients are given full control to play and explore, allowing them to discover how the environment responds to their movement.

We use curiosity as an additional characteristic of play to motivate and engage patients with the Elements environments. According to Thomas Malone (Malone and Lepper, 1987), curiosity is one of the major characteristics that motivate learners to learn more in order to make their cognitive structures better formed. A learner’s curiosity can lead them to explore and discover relationships between their actions and the feedback produced by the environment. Through playful interaction, users can seek out and create new sounds and visual features and explore their combined effects. By doing so, patients may discover new ways of relating to their body and relearn their upper limb movement capabilities in a self-directed fashion.

A video of the Elements system can be accessed at http://www.youtube.com/watch?v=svXT16txEbg

Mixer

Image 2: Patient moves TUI to activate and mix sounds.

Participants use the mixer task to compose musical soundtracks by activating nine preconfigured audio effects. Placing a single TUI on any of the nine circular targets displayed on the screen activates a unique sound (image 2). Sliding the TUI across the target controls the pitch and volume of that sound: the closer the TUI is to the centre of the target, the higher the pitch and volume. Lifting the TUI away from the target leaves the sound playing at the pitch and volume set at that moment. In this way participants can activate and deactivate multiple sounds for simultaneous playback.
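The mixer behaviour described above (proximity to the target centre drives pitch and volume, and lifting the TUI leaves the sound playing) can be sketched as a small state model. This is a hypothetical illustration, not the Elements implementation: the linear proximity mapping, the 0.5 to 2.0 pitch range, the grid layout and the `stop` method used for deactivation are all assumptions, since the text does not say how a sound is switched off.

```python
import math
from dataclasses import dataclass


@dataclass
class Target:
    """A circular on-screen target bound to one sound (hypothetical model)."""
    cx: float
    cy: float
    radius: float
    active: bool = False
    volume: float = 0.0   # 0.0 (silent) to 1.0 (full)
    pitch: float = 1.0    # playback-rate multiplier

    def proximity(self, x: float, y: float) -> float:
        """1.0 at the centre of the target, falling linearly to 0.0 at the rim."""
        d = math.hypot(x - self.cx, y - self.cy)
        return max(0.0, 1.0 - d / self.radius)


@dataclass
class Mixer:
    targets: list[Target]
    min_pitch: float = 0.5  # assumed pitch range, not from the source
    max_pitch: float = 2.0

    def place(self, x: float, y: float) -> None:
        """Update whichever target lies under a TUI resting or sliding at (x, y)."""
        for t in self.targets:
            p = t.proximity(x, y)
            if p > 0.0:
                t.active = True
                # Closer to the centre gives a louder, higher-pitched sound.
                t.volume = p
                t.pitch = self.min_pitch + p * (self.max_pitch - self.min_pitch)
        # Lifting the TUI needs no handler: each target keeps its last
        # volume and pitch, so its sound carries on playing.

    def stop(self, index: int) -> None:
        """Hypothetical deactivation trigger for one target's sound."""
        self.targets[index].active = False

    def playing(self) -> list[tuple[int, float, float]]:
        """(index, pitch, volume) for every sound currently in the mix."""
        return [(i, t.pitch, t.volume) for i, t in enumerate(self.targets) if t.active]


# Usage: nine targets in a 3 x 3 grid, one unit in radius, three units apart.
mixer = Mixer([Target(cx=3.0 * c, cy=3.0 * r, radius=1.0) for r in range(3) for c in range(3)])
mixer.place(0.0, 0.0)   # TUI on the centre of target 0
mixer.place(3.5, 0.0)   # TUI halfway to the rim of target 1
print(mixer.playing())  # [(0, 2.0, 1.0), (1, 1.25, 0.5)]
```

Keeping per-target state means several sounds can remain active at once, which is one simple way to get the simultaneous playback the text describes.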

Squiggles

Image 3: Patient moves multiple TUIs to draw lines and shapes.

The squiggles task encourages participants to draw paint-like lines and shapes on the display using a combination of four TUIs (image 3). Each TUI creates a unique colour, texture and musical sound when moved across the screen. Once drawn, the painted shape appears to come to life: the animation is a replay of the original gesture, thus reinforcing the movement used to create it. The immediacy of drawing combined with the musical feedback enables participants to create animated patterns, shapes, words and characters.
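The replay behaviour described above can be sketched by recording each TUI's tracked positions with timestamps and re-drawing the stroke progressively at the original drawing speed. In this hypothetical sketch, the `Stroke` class, the looping replay and the 0.5-second `hold` on the finished shape are assumptions rather than details from the source; colour is kept only as a label, and sound and texture are omitted.

```python
import bisect
from dataclasses import dataclass, field


@dataclass
class Stroke:
    """One squiggle: the timestamped path traced by a single TUI (hypothetical)."""
    colour: str
    times: list[float] = field(default_factory=list)
    points: list[tuple[float, float]] = field(default_factory=list)

    def record(self, t: float, x: float, y: float) -> None:
        """Append a tracked TUI position; timestamps are assumed to increase."""
        self.times.append(t)
        self.points.append((x, y))

    def duration(self) -> float:
        return self.times[-1] - self.times[0] if self.times else 0.0

    def replay(self, elapsed: float, hold: float = 0.5) -> list[tuple[float, float]]:
        """Points visible `elapsed` seconds into a looping replay of the gesture.

        The stroke is re-drawn at its original speed, then the finished shape
        is held for `hold` seconds before the loop restarts.
        """
        if not self.times:
            return []
        loop = self.duration() + hold
        phase = elapsed % loop if loop > 0 else 0.0
        cutoff = self.times[0] + min(phase, self.duration())
        # Every point recorded at or before the cutoff time is shown.
        return self.points[: bisect.bisect_right(self.times, cutoff)]


# Usage: a three-sample stroke drawn over two seconds.
s = Stroke(colour="red")
for t, x, y in [(0.0, 0.0, 0.0), (1.0, 1.0, 0.0), (2.0, 2.0, 1.0)]:
    s.record(t, x, y)
print(s.replay(1.5))   # [(0.0, 0.0), (1.0, 0.0)]
print(s.replay(2.25))  # [(0.0, 0.0), (1.0, 0.0), (2.0, 1.0)]
print(s.replay(3.0))   # [(0.0, 0.0)]  (the loop has restarted)
```

Replaying from the recorded timestamps, rather than at a fixed rate, is what makes the animation mirror the tempo of the patient's own gesture.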

Swarm

Image 4: Patient moves multiple TUIs to create audiovisual compositions

The swarm task encourages two-handed control (single-handed use is also possible) to explore the audiovisual relationship between the four different TUIs. When the TUIs are placed on the screen, multiple coloured shapes slowly gravitate toward and swarm around the base of each TUI (image 4). As each interface is moved, its swarm follows. The movement, colour, size and sound characteristics of each swarm change when the proximity between the TUIs is altered. This relationship between the objects encourages participants to create unique audiovisual compositions by continually moving the TUIs across the screen.
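A minimal sketch of the swarm behaviour, assuming a damped spring pulls each shape toward the base of its TUI and that swarm size grows as TUIs are brought closer together. The source says only that swarm characteristics change with TUI proximity; the spring model, the jitter term, the `near` and `far` distances and the direction of the size mapping are all illustrative assumptions, and the function names are hypothetical.

```python
import math
import random
from dataclasses import dataclass


@dataclass
class Particle:
    """One coloured shape in a swarm."""
    x: float
    y: float
    vx: float = 0.0
    vy: float = 0.0


def step_swarm(particles, anchor, pull=0.05, damping=0.9, jitter=0.02, rng=random):
    """Advance one frame: each shape drifts toward the TUI base at `anchor`.

    A weak spring (`pull`) plus velocity damping gives a slow, oscillating
    approach; `jitter` keeps the shapes milling around the base. Passing a
    new anchor each frame makes the swarm follow a moving TUI.
    """
    ax, ay = anchor
    for p in particles:
        p.vx = damping * p.vx + pull * (ax - p.x) + rng.uniform(-jitter, jitter)
        p.vy = damping * p.vy + pull * (ay - p.y) + rng.uniform(-jitter, jitter)
        p.x += p.vx
        p.y += p.vy


def swarm_size(anchor, other_anchors, near=1.0, far=10.0):
    """Map distance to the nearest other TUI onto a 0..1 size value.

    Hypothetical mapping: 1.0 at `near` or closer, 0.0 at `far` or beyond.
    The same value could drive colour, shape size or sound parameters.
    """
    if not other_anchors:
        return 0.0
    d = min(math.dist(anchor, o) for o in other_anchors)
    return min(1.0, max(0.0, (far - d) / (far - near)))


# Usage: 20 shapes start away from a TUI resting at the origin.
rng = random.Random(0)
swarm = [Particle(rng.uniform(5.0, 10.0), rng.uniform(5.0, 10.0)) for _ in range(20)]
for _frame in range(300):
    step_swarm(swarm, anchor=(0.0, 0.0), rng=rng)
print(swarm_size((0.0, 0.0), [(1.0, 0.0)]))   # 1.0: TUIs close together
print(swarm_size((0.0, 0.0), [(5.5, 0.0)]))   # 0.5
```

With the default parameters the spring-plus-damping update is stable, so without jitter each shape settles onto its anchor; the jitter term is what keeps the swarm visibly alive around a stationary TUI.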

User Experience and Conclusions

Thirteen patients with TBI have now been introduced to the Elements system and have undergone a course of therapy at the Epworth Hospital in Melbourne, Australia. The goal of our clinical studies has been to evaluate the usability of the system from the patient’s perspective, its aesthetic appeal, patients’ level of engagement with it, and the extent to which movement skills can be enhanced using our interactive environment. We have combined qualitative (self-report) and quantitative approaches to assessment. On the qualitative measures, all of the patients expressed a desire to interact with the system in a creative capacity and have shown increased levels of motivation, engagement and enjoyment while undertaking the study. They report that the system is intuitive to use and that the therapy, particularly the exploratory environments, represents a fun diversion from the normal rigours of physical therapy in rehabilitation (Duckworth et al, 2008). The relatively young patients have responded well to the technology and to the aesthetic of the therapeutic environments, which are far removed from their normal experience of rehabilitation. On the quantitative measures, our data show significant improvements in movement accuracy, efficiency, and attention to task. These results suggest that creative and game-style applications tailored for TBI patients may improve their motor skills and their sense of agency and control. Such environments may also be a means to improve quality of life in general, as a sense of agency is intimately entwined with one’s sense of purpose, achievement and happiness. Furthermore, it may be possible to tailor the system for a broader spectrum of people with mobility impairments.

Our approach of combining embodied interaction design with computer-mediated art has been successful, exceeding our expectations in terms of both its therapeutic effect and user engagement. The two aesthetic modes of user interaction provide the patient with many options for movement, ranging from the clear goals of the game-like tasks to the ambiguity of the exploratory artistic environments. The audiovisual feedback appeared to augment the relationship between the moving body and its effects on the environment. In other words, the feedback enabled the user to better predict the changing flow of sensory information that occurred as a result of their movement, which is regarded as a vital aspect of movement control (Garbarini and Mauro, 2004). The exploratory workspaces also tended to heighten the user’s sense of agency, as their early, tentative explorations produced perceptual events that were at once curious and compelling. The aesthetic seemed to draw the user into the space, encouraging a cycle of further exploration and play. In short, by making the relationship between movement and its effects less obvious and by removing explicit goals, the environments afforded playful interaction.

The overall success of this strategy raises many intriguing questions for future work. The transformative effects observed in patients using the Elements system open up avenues for further scientific thought and artistic practice. Not least of these are the moral and ethical implications, for patients and therapists, of the marriage of art and technology. For example, how do we deal with the notion that screen-based works may facilitate physical changes at a neuronal level, beyond the behavioural and performance changes that we observed? Moreover, how can interactive environments that promote creativity be primed and harnessed to stimulate the learning of movement over time? There are surprisingly few applied computer-mediated artistic interventions that combine embodiment and play as a basis for hospital-based rehabilitation. TBI patients are a new audience for media artists to consider. Our work shows that the reciprocal role of new media art and health science in developing therapeutic applications is rich with future possibilities.

Acknowledgements

This work was supported in part by an Australian Research Council (ARC) Linkage Grant LP0562622, and a Synapse Grant awarded by the Australia Council for the Arts. The authors also wish to acknowledge the assistance of Ross Eldridge, PhD candidate, School of Electrical and Computer Engineering, RMIT University; Nicholas Mumford, PhD candidate, Division of Psychology, RMIT University; computer programmer Raymond Lam; Patrick Thomas and David Shum, Griffith University; and Dr Gavin Williams, Senior Physiotherapist at the Epworth Hospital, Melbourne, Australia.

Biographies

Jonathan Duckworth is an artist, designer and founder of new media practice ZedBuffer, and PhD candidate at the School of Media and Communication, RMIT University. Jonathan has a particular interest in design, embodied interaction, and the psychology of using digital environments. His creative work is informed by a broad range of research in 3D graphics technology, interaction design, physical computing, interactive architecture and new electronic materials that bring to the fore unprecedented scope to modify computer user experiences in engaging and innovative ways.

Peter H. Wilson is an Associate Professor in Psychology at RMIT University. Over the past 12 years, he has coordinated a program of research in the field of motor and cognitive development, supported by a number of ARC grants. He has published numerous papers on movement dysfunction and, latterly, on virtual-reality based treatments for people with brain injury. He works closely with new media artists in pursuit of new approaches to augmenting therapy.

Endnotes

[1] http://www.nintendo.com/wii/what/controllers

[2] The term ecological refers to the view that behaviour or action can only be fully appreciated by understanding the nature of the interaction between the individual, the task at hand, and the structure of the physical and social environment. Hence, alterations at any of these levels can affect performance; for example, the physical texture of an object can influence how a user interacts with it.

References

Barsalou, L.W. Grounded cognition. Annual Review of Psychology, 59, (2008), 617-645.

Brooks A. L., and Hasselblad, S., Creating aesthetically resonant environments for the handicapped, elderly and rehabilitation: Sweden, Proc. 5th Int. conference on Disability, Virtual Reality and Associated Technologies, Oxford (2004) 191−198.

Dourish, P., Where the Action Is: The Foundations of Embodied Interaction (Cambridge, Massachusetts: MIT Press, 2001), 17-22.

Duckworth, J., Wilson, P., Mumford, N., Eldridge, R., Thomas, P., and Shum, D. ‘Elements: Art and Play in a Multi-modal Interactive Workspace for Upper Limb Movement Rehabilitation’, Proceedings of the 14th International Symposium on Electronic Art, (2008), Singapore, 25 July-3 August

Esbensen, A.J., Rojahn, J., Aman, M.G. and Ruedrich, S., ‘The reliability and validity of an assessment instrument for anxiety, depression and mood among individuals with mental retardation’, Journal of Autism and Developmental Disorders, (2003) vol. 33, pp. 617–629.

Fortune, N., Wen, X., “The definition, incidence and prevalence of acquired brain injury in Australia”, Australian Institute of Health and Welfare: Canberra, (1999) 143.

Gaggioli, A., Meneghini, A., Morganti, F., Alcaniz, M., and Riva, G., ‘A strategy for computer-assisted mental practice in stroke rehabilitation’, Neurorehabilitation and Neural Repair, vol. 20, (2006), 503-507.

Garbarini, F., and Mauro, A., ‘At the root of embodied cognition: Cognitive science meets neurophysiology’, Brain and Cognition, vol. 56, (2004), 100-106.

Gibson, J.J., The senses considered as perceptual systems (Boston: Houghton Mifflin, 1966)

Glenberg, A.M. & Kaschak, M.P. Grounding language in action. Psychonomic Bulletin and Review, 9, (2002), 558-569.

Green, C. S., Bavelier, D. ‘The Cognitive Neuroscience of Video Games’, in Digital Media: Transformations in Human Communication (New York; Oxford, 2004), 211-224

Holden, M.K., ‘Virtual Environments for Motor Rehabilitation: Review’, CyberPsychology & Behavior, vol. 8, no. 3, (2005), 187.

Ishii, H. and Ullmer, B., ‘Tangible bits: towards seamless interfaces between people, bits and atoms’ SIGCHI conference on Human factors in computing systems, Atlanta, Georgia, United States, 1997.

Krueger, M.W. Artificial Reality II. (Addison-Wesley Publishing Company, Reading, Mass., 1991)

Malone, T. W., and Lepper, M.R., ‘Making learning fun: A taxonomy of intrinsic motivations for learning’, in R. E. Snow and M. J. Farr (eds), Aptitude, Learning and Instruction. Volume 3: Cognitive and Affective Process Analysis (Hillsdale, NJ: Lawrence Erlbaum, 1987).

Mandler, J. (1992). How to build a baby: II. Conceptual primitives. Psychological Review, 99, 587-604.

Massumi, B. Parables for the Virtual: Movement, Affect, Sensation (Durham & London: Duke University Press, 2002), 1.

McCrea, P.H., Eng, J.J., Hodgson, A.J., ‘Biomechanics of reaching: clinical implications for individuals with acquired brain injury.’ Disability & Rehabilitation, London, Informa Healthcare, 24(10), (2002), 534 – 541.

McLuhan, E. and Zingrone, F., (eds), Essential McLuhan. (Toronto: Anansi, 1995), 119-120.

Platz, T., S. Hesse, and K.H. Mauritz., Motor rehabilitation after traumatic brain injury and stroke – Advances in assessment and therapy. Restorative Neurology and Neuroscience, 14, (1999), 161–166.

Reid, D.T., ‘The benefits of a virtual play rehabilitation environment for children with cerebral palsy on perceptions of self-efficacy, a pilot study’, Paediatric Rehabilitation, vol. 5, no.3, (2002), 141–148.

Rizzo, A. A., Schultheis, M. T., Kerns, K. A., and Mateer, C., ‘Analysis of assets for virtual reality applications in neuropsychology’, Neuropsychological Rehabilitation, vol. 14, (2004), 207-239.

Rose, F.D. Virtual reality in rehabilitation following traumatic brain injury, European Conference on Disability, Virtual Reality & Associated Technology, ECDVRAT, Maidenhead, UK, 1996.

Shum, D., Valentine, M. and Cutmore, T. ‘Performance of Individuals with Severe Long-Term Traumatic Brain Injury on Time-, Event-, and Activity-Based Prospective Memory Tasks’, Journal of Clinical and Experimental Neuropsychology, 21 (1), (1999), 49-58.

Wilson, P.H., Thomas, P., Shum, D., Duckworth, J., Guglielmetti, M., Rudolph, H., Mumford, N., and Eldridge, R., ‘A multilevel model for movement rehabilitation in Traumatic Brain Injury (TBI) using Virtual Environments’, 5th International Workshop on Virtual Rehabilitation, New York, August 29-30, 2006.

Wilson, P.H., Duckworth, J., Mumford, N., Eldridge, R., Guglielmetti, M. Thomas, P., Shum, D., and Rudolph, H., ‘A virtual tabletop workspace for the assessment of upper limb function in Traumatic Brain Injury (TBI)’, 6th International Workshop on Virtual Rehabilitation, Venice, September 27-29, 2007.

This work is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 3.0 Australia License.